Machines in the Middle: A Review of Exploring AI in Applied Linguistics

Olesia Pavlenko, University of New Hampshire, New Hampshire, USA

From Efficiency to Equity: Reframing AI’s Role in Language Education

I approach this book not just as a researcher, but as a teacher who has had to rethink what learning looks like when machines begin to speak fluently. I did not expect AI to enter my ESL classroom so quickly. At first, it appeared quietly in student writing, with polished sentences that did not quite sound like the student who submitted them. Then AI appeared in their questions, their drafts, and their daily routines. What began as a novelty has become a regular part of how students approach language and learning.

As a teacher and teacher educator, I have had to adapt quickly while also asking what counts as meaningful learning when tools like ChatGPT are already part of the process. In my work with educators and students, I see a wide range of reactions. Teachers often value AI’s efficiency but worry about losing professional judgment. Students adopt these tools quickly, often without reflection. These responses point to a deeper uncertainty about AI’s place in language education and who defines what learning should look like in this changing landscape.

Exploring AI in Applied Linguistics, edited by Chapelle, Beckett, and Ranalli, addresses this moment of transition. Its fifteen chapters, drawn from the 2023 Technology for Second Language Learning (TSLL) Conference, are grouped into four sections: student use, assessment, research, and teacher education. The edited volume offers a timely account of how the field is responding to generative AI, inviting reflection rather than offering fixed answers. This review focuses on three themes that align with my teaching and professional development work: students’ strategic use of AI, AI in assessment, and teacher agency. Chapters centered on model performance or technical configurations are not discussed here, as they fall outside the scope of classroom practice and the reflective purpose of this review.

Students at the Interface: Using AI Creatively, Not Passively

Chapters 2–4 offer a clear view of how students use AI across different school settings. Godwin-Jones et al. (Chapter 2) discuss how language learners use Grammarly and ChatGPT to support their writing, largely resolving issues on their own. Baumgart et al. (Chapter 3) show that students in English-medium courses used ChatGPT throughout the writing process, often skipping key steps such as reading and organizing their ideas. Kusumaningrum et al. (Chapter 4) focus on practical writing tasks, such as emails, showing how students relied on ChatGPT to produce polished messages but were inconsistent in checking or revising the output.

AI tools, including ChatGPT, offer new possibilities for supporting language learning, yet students often use them enthusiastically and with little reflection. Many polish essays, generate ideas, or seek quick answers without considering whether the output truly supports their learning goals. Because these tools are so easy to use, students may not engage critically with them. Their enthusiasm is promising, but it also highlights the need for structured guidance to help them develop reflective and ethical AI use.

This is why AI literacy belongs in language and writing instruction. Warschauer et al. (2023) show that while generative AI can help L2 writers express ideas and complete tasks more efficiently, it also raises concerns about overreliance, loss of voice, and ethics. My teaching confirms the need for clear guidance. Knowing how to use AI is not enough; students must also understand genres, assess outputs, and consider ethical implications. For example, I often assign tasks in which students compare AI- and human-written texts, reflect on revisions, and explain their choices. As AI becomes a regular part of language education, teaching students to use it wisely is essential for developing responsible, independent writers.

While these chapters foreground student agency and experimentation, the next group of studies shifts the focus to how AI is being evaluated and entrusted with the complex work of assessment.

When AI Scores (But Should It?)

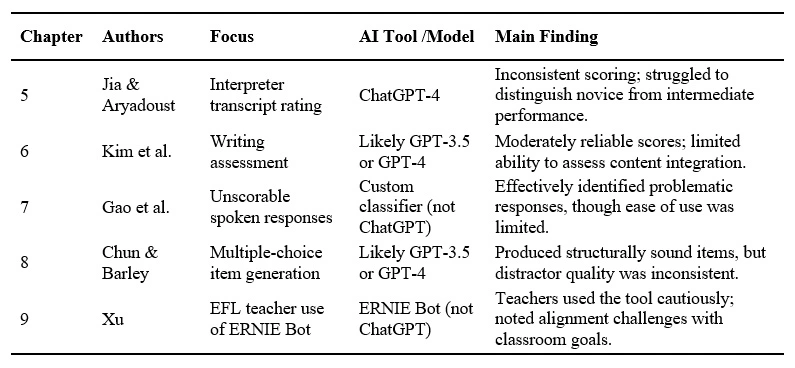

AI is entering language assessment, a high-stakes domain where scoring complexity and ethical concerns demand attention (Harding et al., 2015). In my own teaching and professional development work, I have seen the promise of generative AI tools, particularly in saving time on low-stakes feedback. At the same time, I have observed how easily these tools can be overtrusted. Chapters 5 through 9 highlight this ongoing tension across a range of assessment contexts, models, and findings. Table 1 summarizes key insights from these chapters, offering a comparative view of how AI is being tested, trusted, and critiqued in classroom-relevant settings.

Across Chapters 5–9, results vary considerably depending on the AI model, prompt calibration, and rubric clarity. Chapter 5 evaluates ChatGPT-4 in interpreting assessment using a calibrated prompt and a detailed rating scale, while Chapter 6 examines ChatGPT’s essay-scoring performance without full calibration. Chapter 8 employs GPT-3.5 to generate multiple-choice questions guided by Haladyna’s (2004) rubric, and Chapter 9 investigates teachers’ use of ERNIE Bot to assess writing. This range shows that “AI” in assessment encompasses diverse and rapidly evolving systems. Studies with well-calibrated prompts and explicit rubrics (Chs. 5 and 8) produced more consistent results, whereas those using default or loosely structured inputs (Chs. 6 and 9) yielded more variable ones. These methodological differences likely contributed to the divergent outcomes and highlight the need for clearer documentation of models, prompts, and rubrics. Taken together, these findings point to continuing questions about reliability, transparency, and accountability in AI-based assessment.

As experimentation with AI becomes increasingly common in language education, the question remains: how are educators responding to this shifting landscape, and what kinds of professional development can help them integrate AI in ways that support both learning and professional judgment?

Table 1

Patterns and Findings Across Chapters 5–9

AI and the Teacher's Voice

In my work with teachers and tutors, I have seen AI elicit responses ranging from curiosity to resistance. Teachers already manage countless responsibilities and are now expected to use tools they never asked for. Some worry that AI may diminish their judgment; others want to try it but do not know where to start. When teachers have time to test these tools, however, doubt often gives way to curiosity, and they evaluate AI with greater confidence. This movement from hesitation to exploration is a pattern I continue to observe in professional development settings.

Chapelle et al. (Chapter 13) describe patterns similar to those I observed during a recent professional development workshop. In that workshop, teachers experimented with ChatGPT for lesson planning, classroom materials, and student responses, and each teacher brought a distinct perspective, revealing both the tool’s potential and its limitations in instructional contexts. Throughout, teachers remained critical, consistently asking whether AI supported student learning. Kostka and Toncelli’s (2023) study reflects the same range of responses: some teachers embraced ChatGPT, while others raised concerns about reliability, workload, and ethical alignment. Chapter 14 (Compagnoni) extends this discussion by focusing on AI in immersive virtual reality environments, where technical and cognitive challenges further highlight the need for sustained support alongside innovation. Taken together, these findings and my own observations suggest that professional development must move beyond tool demonstrations and create space for critical reflection on AI’s role in teaching.

These chapters reinforce what I have consistently observed: successful AI use in education depends less on the sophistication of the tool and more on teacher autonomy. Professional development must provide room for both exploration and critical inquiry. When teacher agency is prioritized, AI becomes a resource teachers can shape to reflect their values and their students’ needs.

Gaps and Future Directions

While Exploring AI in Applied Linguistics offers valuable insights, most chapters focus on ChatGPT, with comparatively limited discussion of other AI models or platforms. Although Chapter 9 (Xu) includes ERNIE Bot and Chapter 11 (Zhou and Li) briefly references Microsoft Copilot, the volume engages less with tools such as Gemini, DeepSeek, or You.com. Learner-facing platforms like Duolingo Max are also not explored in depth. As the field of AI continues to expand, there is a growing need for independent, practice-oriented reviews that assess the effectiveness, accessibility, and linguistic relevance of a wider range of technologies. The volume might also be strengthened by more sustained attention to student perspectives, not only as users but as active contributors to how AI is implemented in language learning contexts.

Beyond these practical gaps, several ethical and methodological questions also warrant further exploration. Can we explain how AI systems reach their decisions? Why do outputs differ across platforms and tasks? Whose norms shape these outputs? To what extent do we risk reinforcing bias by outsourcing evaluative judgment to opaque computational processes? Addressing these questions will be essential for ensuring transparency, fairness, and interpretability in future AI-based assessment research.

In addition to these research priorities, the increasing presence of AI in classrooms invites reflection on how we define effective learning. As machines support writing, provide feedback, and assist with grading, it becomes essential to consider what constitutes authentic engagement and meaningful progress. The implications for school policies, particularly those related to authorship, evaluation, and academic integrity, are still developing. Recent research suggests that even the most advanced AI writing tools require teacher interpretation and pedagogical framing, since technology alone cannot support learners in the nuanced ways that educators can (Barrett & Pack, 2023). Balancing automation with human insight will be important as AI becomes more embedded in teaching and learning.

Pedagogy in the Loop

The timely book Exploring AI in Applied Linguistics shows how AI is reshaping language teaching. Rather than offering quick solutions, it invites thoughtful reflection, balancing innovation with care. Across testing, teacher training, and student learning, the research makes clear that what matters is not the tool itself but how we use it. Teachers should focus less on mastering new tools and more on maintaining sound pedagogy. Our task is to help students learn language, not to let machines do that learning for them. We share a responsibility to ensure that AI enhances learning rather than hinders it. Teaching must serve all students, not only the tech-confident. Ultimately, learning, not novelty, should guide how we use AI.

References

Barrett, A., & Pack, A. (2023). Not quite eye to AI: Student and teacher perspectives on the use of generative artificial intelligence in the writing process. International Journal of Educational Technology in Higher Education, 20(1), Article 59. https://doi.org/10.1186/s41239-023-00427-0

Chapelle, C. A., Beckett, G. H., & Ranalli, J. (Eds.). (2024). Exploring AI in applied linguistics. Iowa State University Digital Press. https://doi.org/10.31274/isudp.2024.154

Godwin-Jones, R. (2022). Partnering with AI: Intelligent writing assistance and instructed language learning. Language Learning & Technology, 26(2), 5–24. https://doi.org/10.64152/10125/73474

Harding, L., Alderson, J. C., & Brunfaut, T. (2015). Diagnostic assessment of reading and listening in a second or foreign language: Elaborating on diagnostic principles. Language Testing, 32(3), 317–336. https://doi.org/10.1177/0265532214564505

Kostka, I., & Toncelli, R. (2023). Exploring applications of ChatGPT to English language teaching: Opportunities, challenges, and recommendations. TESL-EJ, 27(3), 1–19. https://doi.org/10.55593/ej.27107int

Warschauer, M., Tseng, W., Yim, S., Webster, T., Jacob, S., Du, Q., & Tate, T. (2023). The affordances and contradictions of AI-generated text for writers of English as a second or foreign language. Journal of Second Language Writing, 62, Article 101071. https://doi.org/10.1016/j.jslw.2023.101071

Olesia Pavlenko is a Ph.D. student in Education at the University of New Hampshire. Her research focuses on the role of artificial intelligence and multimodal technologies in language learning and literacy. She also teaches courses on linguistic diversity and supports teacher professional development in multilingual and technology-enhanced classrooms.