(How) Can I Tell If ChatGPT Wrote My Students’ Assignments?

Sebnem Kurt, Shuhui Yin, Danilo Calle Londono, Hwee Jean Lim, In Young Na, Nergis Danis, Carol A. Chapelle, Iowa State University, Ames, USA

Language teachers are among the professionals wanting to know how to distinguish language generated by AI tools from discourse produced by humans. When students submit computer-generated text instead of their own writing, they lose the opportunity to learn how to write. Therefore, teachers typically want to be able to identify students who need to be encouraged to write their own assignments. The widespread interest in machine-generated text has arisen after many years of research because at last researchers have succeeded in producing machine-generated language that passes the Turing test. The Turing test refers to the challenge Turing set for scientists in the 1950s to create a machine that could feign humanity so well that it would escape detection by a human interlocutor, the interrogator, in Turing’s imitation game. The most suitable test of humanity, in Turing’s view, was the linguistic responses that the machine would give to the interrogator’s questions. The imitation game, Turing’s thought experiment, has gone live today. Language teachers have become Turing’s interrogators, questioning whether their students’ responses to assignments consist of language generated by AI or discourse the students produced.

Some teachers claim to be able to tell the difference between text produced by AI and text produced by students, but the research investigating authorship detection paints a murkier picture: teachers judge authorship correctly in about 70% of cases at best when asked to distinguish between texts authored by humans and those generated by machine (e.g., Alexander et al., 2023; Fleckenstein et al., 2024; Waltzer et al., 2024). Even judges who report a high degree of confidence are not necessarily accurate (e.g., Waltzer et al., 2024). Moreover, teachers tend to treat errors as an indicator of student authorship (Alexander et al., 2023). Such a deficit model of student writers, which positions them as flawed versions of automated text generators, may be a good strategy for an interrogator but not for a teacher. Previous researchers recommended that, rather than teaching students to include grammatical errors in their texts, teachers should learn strategies for identifying AI-generated texts. Intending to discover such strategies, we designed a modern imitation game beginning with three teachers and a collection of texts, half produced by students and half generated by ChatGPT-4o.

Our Imitation Game

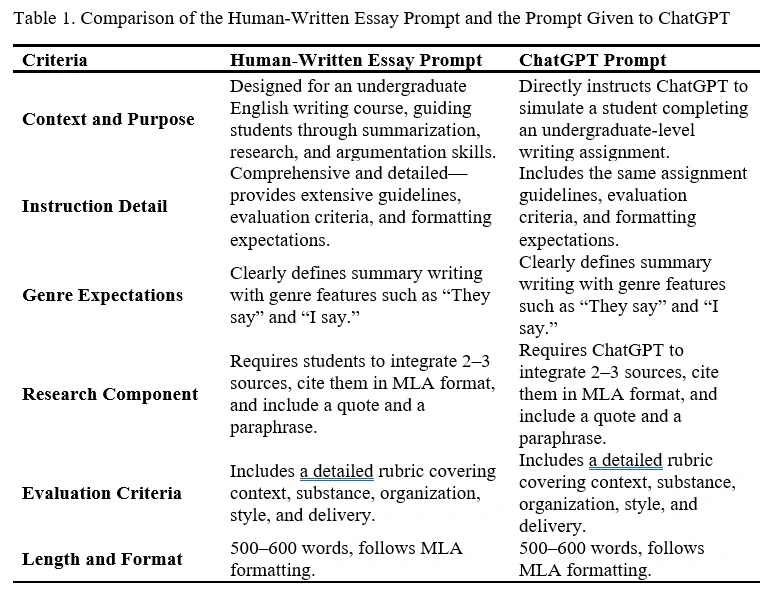

This study employs a mixed-methods approach to explore the language characteristics of student-authored and AI-generated language that influence teachers’ judgments about authorship. One part of the quantitative strand was a corpus analysis of 60 texts—30 written by students prior to the public release of ChatGPT (Anders, 2022), and 30 generated by ChatGPT-4o in response to a prompt. The prompts asked the students to write and instructed ChatGPT to generate a summary of an article from The New York Times. The prompt prepared for ChatGPT was carefully designed to provide sufficient context and details to allow it to generate essays reflecting the assignment guidelines that students had received, as summarized in Table 1. The corpus analysis (Biber, 1988) identified key lexicogrammatical features (Egbert & Biber, 2023) that distinguished between AI-generated and human-authored texts. The other part of the quantitative strand gathered the teachers’ authorship judgments on 30 (15 student-authored and 15 computer-generated) of the texts individually and their estimates of their level of confidence (from zero to 100) for each judgment directly afterward. On average, the teachers, who had all taught the writing course in the past, achieved a level of 82% accuracy and reported a 92% level of confidence.
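For readers who want to try a similar judgment task with colleagues, the scoring described above can be sketched in a few lines of Python. This is only an illustration, not the procedure used in the study: the record layout, the `summarize` function, and the four toy judgments are all hypothetical.

```python
from statistics import mean

# Hypothetical judgment records for one teacher (not data from the study):
# (true author, judged author, confidence from 0 to 100).
judgments = [
    ("student", "student", 95),
    ("chatgpt", "chatgpt", 90),
    ("student", "chatgpt", 80),
    ("chatgpt", "chatgpt", 100),
]


def summarize(records):
    """Return (accuracy as a percentage, mean confidence) for one judge."""
    correct = [truth == guess for truth, guess, _ in records]
    accuracy = 100 * sum(correct) / len(records)
    confidence = mean(conf for _, _, conf in records)
    return accuracy, confidence


accuracy, confidence = summarize(judgments)
print(f"accuracy={accuracy:.1f}%  mean confidence={confidence:.1f}")
```

Keeping accuracy and confidence side by side makes it easy to see whether a judge's confidence runs ahead of their accuracy, which is the pattern reported in earlier studies.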

Qualitative data were gathered during the judgment task as teachers narrated their thought processes while formulating their opinions of who had authored each text. The three teachers’ think-aloud sessions were recorded and transcribed, and then the teachers participated in a focus group discussion. Taking a closer look at two essays, a student-authored one and a ChatGPT-generated one, we carried out a qualitative functional linguistic analysis. By triangulating quantitative and qualitative data, we identified the following key strategies.

Key Strategies

Our triangulated interpretation from the teachers’ focus group discussion, functional linguistic analysis of the pair of example papers, and quantitative analysis revealed systematic and explainable differences between papers written by the students and those generated by ChatGPT-4o. We found correct authorship decisions relied on two sets of strategies, one associated with the language in the papers, and the other with the teachers’ knowledge of the students and assignments.

Clues in Meaning and Lexicogrammar

Teachers’ comments touched on aspects of the language spanning functional meaning and lexicogrammar which are illustrated through the functional analysis in Table 2. One successful strategy teachers identified was, as Teacher 1 put it, looking for instances of “expressing personal experiences, communicating in a very human way, using pronouns, and referring to specific ideas.” Illustrations appearing throughout the example student paper include the rhetorical questions and the second-person pronouns in Table 2, Row 1.

Table 2. Examples of Grammatical Differences in how Functional Meaning is Expressed in Writing by a Student and by ChatGPT

| Functional Meaning | Student Writing | ChatGPT Writing | Grammatical Differences |
| --- | --- | --- | --- |
| 1. Attract readers’ interest and attention (first sentence) | “What if drinking alcohol in moderation had benefits? Would you ignore the potential risks?” | “In her article ‘America Has a Drinking Problem,’ Kate Julian explores…” | Student: Uses rhetorical questions. ChatGPT: Uses no rhetorical questions. |
| 2. Introduce the main idea to be summarized in the paper (stated in the first paragraph) | “…the author argues that alcohol in moderation presents benefits to people, alongside the obvious detriments.” | “Kate Julian explores the complex relationship between alcohol consumption and American society.” | Student: Depicts the original article as a two-sided issue that the author (Julian) argues exists. Uses the “[person] argues” pattern. ChatGPT: Depicts the original article as an exploration of an existing social phenomenon to be explored. Uses the “[person] explores” pattern. |
| 3. Elaborate on the author’s treatment of the topic (stated in the first paragraph) | “The article states that alcohol, in smaller and controlled amounts, can loosen creative thinking and make us more sociable and open to sharing and spreading ideas.” | “Julian discusses the historical and cultural significance of drinking in America, from the Pilgrims’ early dependence on beer to modern concerns about excessive alcohol use.” | Student: Elaborates on the topic using verbs to express what alcohol can do (loosen creative thinking and make us more sociable). Uses first-person “us” to include readers in the topic. ChatGPT: Elaborates on the topic using complex noun phrases (“the historical and cultural significance of drinking in America”). |
| 4. Focus the topic on Americans | “… Julian talks about Americans' tendencies to drink too much, as well as about the possible benefits that drinking more moderately could bring us.” | “She highlights how Americans have historically oscillated between overindulgence and abstinence, resulting in a cycle of binge drinking followed by renunciation.” | Student: Uses a less formal variation on the “[person] argues” pattern: “[person] talks about.” Uses first-person “us.” ChatGPT: Uses a variation on the “[person] explores” pattern with the verb “highlight.” Uses low frequency verbs (“oscillated”) and nominalizations (“overindulgence,” “abstinence,” and “renunciation”). |
| 5. Support topic by making reference to an expert | “As Julian quotes Edward Slingerland, a professor at the University of British Columbia… The author believes that alcohol has benefits when it comes to the flow of ideas and sociability.” | “Julian delves into the reasons for these trends, drawing on the work of scholars like Edward Slingerland, who theorizes that alcohol plays a key role in social bonding and creativity.” | Student: Reports on a quote from the expert as the basis for Julian’s belief (“the author believes that”). “Believe” is a verb that expresses personal feelings. ChatGPT: Presents the expert’s work as the basis for understanding the social phenomenon of alcohol consumption and paraphrases it in a noun phrase object to the verb “theorize,” which expresses analytic thinking, not personal feelings. |
| 6. Support topic with statistics | “As most of us know, alcohol abuse is a serious issue in the United States, so is there a possibility of us being able to drink moderately? Well, that's part of the problem; as Julain puts it, “From 1999 to 2017, the number of alcohol-related deaths in the U.S. doubled. (Julian)” | “…the pandemic accelerated problematic drinking behaviors, particularly solitary drinking... For example, statistics show that alcohol-related deaths in the U.S. doubled from 1999 to 2017, with rates likely rising further during the pandemic (Julian, 2021).” | Student: Uses statistics to support common knowledge (“as most of us know”) and as evidence pertaining to the rhetorical question: “is there a possibility of us being able to drink moderately?” First-person “us” appears in both constructions. ChatGPT: Uses statistics to support the previous statement about a historical trend (“the pandemic accelerated problematic drinking behaviors”). |
| 7. Introduce an additional source | “All of this leads into the next article and the primary question, is alcohol in moderate amounts healthy? In his article about the risks and benefits of moderate alcohol consumption…” | “An additional source supports Julian’s claims about the cultural and psychological impacts of alcohol. In The Journal of American College Health, researchers found…” | Student: Cohesion marked with reference to “All of this” meaning previous discussion in the paper. ChatGPT: Cohesion marked with reference to the main argument identified in the original article: “An additional source supports Julian’s claims about the cultural and psychological impacts of alcohol.” |
| 8. Conclude with overview statement | “In conclusion, at least as of now, the evidence to support moderate alcohol consumption being healthy just isn’t there. In my opinion, and more than likely the opinion of many others, more research…” | “In conclusion, Julian’s article provides a compelling overview of America’s complicated relationship with alcohol.” | Student: Concludes with conversational expression “just isn’t there” and supports with writer’s opinion which is connected to the opinion of others “In my opinion, and more than likely the opinion of many others.” ChatGPT: Concludes with a claim about the author’s success in providing an overview of the social phenomenon “America’s complicated relationship with alcohol.” |

In contrast to the personal, human, and specific language in the students’ writing, teachers saw ChatGPT’s writing as more general. For example, they described the conclusions in the papers as ending “with a grand, overarching commentary on societal values. Humans, on the other hand, wrap things up with more personal reflections—like lessons learned or how it relates to their own lives” (Teacher 2). Table 2, Row 8 illustrates the distinction: the student concludes with reference to the current time, “at least as of now” and “In my opinion”; ChatGPT concludes with the “compelling overview of America’s complicated relationship with alcohol.” The quantitative corpus analysis supported the distinction identified by the teachers and illustrated in the example—more personal language (such as second-person pronouns) appears in students’ writing across all papers.

Another salient feature was the “sophisticated words” and specifically “nominalizations” in ChatGPT’s papers (Mizumoto et al., 2024; Zhou et al., 2023). In support, the quantitative analysis showed that ChatGPT’s papers included longer words, more nouns and nominalizations, and other features connected to noun phrases. The functional analysis shows how the groundwork for vocabulary choices was established at the beginning of the paper, where ChatGPT frames the topic broadly as “the complex relationship between alcohol consumption and American society.” The student’s topic, in contrast, is the “benefits” and “detriments” of alcohol, a topic accessible to the author for comment by building on the readers’ presumed prior knowledge. With their respective stages set in the introduction, even though the two papers perform the same functions, they do so differently, as shown throughout the examples in Table 2.
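Teachers who want to check these contrasts in their own students' texts could start from a rough sketch like the one below. It is not the tagger or feature set used in the corpus analysis: the suffix list, the pronoun list, and the `tokenize` and `features` helpers are simplifying assumptions made for illustration, and a suffix match will both miss some nominalizations and count some words that are not nominalizations. The two sample sentences are taken from Table 2.

```python
import re
from statistics import mean

# Assumed, deliberately small lists; a real analysis would use a tagger.
NOMINAL_SUFFIXES = ("tion", "tions", "sion", "sions", "ment", "ments",
                    "ness", "ity", "ities", "ence", "ance")
PERSONAL_PRONOUNS = {"i", "me", "my", "we", "us", "our", "you", "your"}


def tokenize(text):
    """Lowercase the text and return its alphabetic word tokens."""
    return re.findall(r"[a-z]+(?:'[a-z]+)?", text.lower())


def features(text):
    """Return rough per-text measures of informational vs. personal style."""
    words = tokenize(text)
    n = len(words)
    # Heuristic: long words ending in a typical noun-forming suffix.
    nominal = sum(len(w) >= 7 and w.endswith(NOMINAL_SUFFIXES) for w in words)
    personal = sum(w in PERSONAL_PRONOUNS for w in words)
    return {
        "mean_word_length": mean(len(w) for w in words),
        "nominalizations_per_1000": 1000 * nominal / n,
        "personal_pronouns_per_1000": 1000 * personal / n,
        "questions": text.count("?"),
    }


student_text = ("What if drinking alcohol in moderation had benefits? "
                "Would you ignore the potential risks?")
chatgpt_text = ("She highlights how Americans have historically oscillated "
                "between overindulgence and abstinence, resulting in a cycle "
                "of binge drinking followed by renunciation.")

student = features(student_text)
chatgpt = features(chatgpt_text)
for name in student:
    print(f"{name}: student={student[name]:.2f}  chatgpt={chatgpt[name]:.2f}")
```

Rates are normalized per 1,000 words so that texts of different lengths can be compared. Single sentences are far too short for reliable rates, so the sketch is best run on whole papers.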

Knowledge of the Students and the Assignment

Teachers drew on their familiarity with students’ writing practices and the typical progression of assignments in the course to make judgments about authorship. In many cases, they were able to recognize the voice of the students reflected in the language choices described above. For example, the teachers commented that “students want to show themselves as the authors” (Teacher 1) through the use of first-person pronouns to connect the topic to their own experiences and perspectives. Teachers reported that they evaluated whether the writing reflected personal experiences, rhetorical choices, and stylistic elements typically associated with students’ unique voices. They found AI-generated essays formal and informational in contrast to student-written essays that communicated more personally and contextually with vocabulary that one might find in spoken language.

Referring to her past experience, Teacher 2 explained, “I also remembered the types of activities we got students to do before writing each major assignment in each module and how they built their essays by building up from what they had written in those exercise activities and then putting things together.” This familiarity with the incremental nature of the course assignments allowed teachers to identify patterns consistent with student-generated texts. In addition, knowledge of the assignment guidelines is of help in judging authorship. As Teacher 3 noted, “I found one student’s writing that obviously used ChatGPT. It didn’t follow the assignment requirements at all. I also based my judgment on using those assignment guidelines and requirements that I remember from those classes to judge whether it’s an AI- or human-generated text.” Teachers emphasized that even though ChatGPT produces grammatically perfect responses, its output often lacks alignment with the expectations of the assignment. This misalignment provided a clue despite the fact that ChatGPT was prompted with the same assignment guidelines that were given to students.

In short, the teachers’ knowledge of the students’ abilities, the course content, and the assignment guidelines allowed them to insightfully interpret the language in the texts and was critical to their success in distinguishing between AI-generated and human-authored texts.

The Take-Away

As teachers unwittingly take on the role of interrogator in language classrooms throughout the world, we recommend that they work to develop their own GenAI literacy for English language teaching, which includes developing strategies for detecting likely cases of GenAI use in written assignments. Our research points out how teachers can leverage their own knowledge of their students and their assignment demands as well as their language analysis skills to better understand the language generated by AI tools like ChatGPT. The teachers in our study drew on their experience with the types of students who take the writing course where the papers were written as well as their knowledge of the writing assignment itself. This level and type of familiarity gave them an advantage not seen in other studies where the human judges did not necessarily have a strong connection to the course and specifics of the assignment even if they had experience in teaching writing (e.g., Alexander et al., 2023). Teachers are also able to examine texts analytically to identify features such as the interpersonal language of questions and first- and second-person pronouns as well as the informational language of adjectives and nominalizations. With these insights, teachers can work together to generate texts based on the writing assignments they give to students and the prompts they prepare for a GenAI tool to develop their skills in identifying distinguishing features of texts produced by students and those generated by computers.

Acknowledgement

The corpus used in the study was created by Dr. Abram Anders, Principal Investigator of IRB Exempt study 20-418-00. The artifacts included in this corpus were created by students who gave informed consent for both primary and secondary research studies based on de-identified data.

References

Alexander, K., Savvidou, C., & Alexander, C. (2023). Who wrote this essay? Detecting AI-generated writing in second language education in higher education. Teaching English with Technology, 23(2), 25–43. https://doi.org/10.56297/BUKA4060/XHLD5365

Anders, A. (2022). ISUComm Pilot Corpus - Spring 2022.

Biber, D. (1988). Variation across speech and writing. Cambridge University Press. https://doi.org/10.1017/CBO9780511621024

Egbert, J., & Biber, D. (2023). Key feature analysis: A simple, yet powerful method for comparing text varieties. Corpora, 18(1), 121–133. https://doi.org/10.3366/cor.2023.0275

Fleckenstein, J., Meyer, J., Jansen, T., Keller, S. D., Köller, O., & Möller, J. (2024). Do teachers spot AI? Evaluating the detectability of AI-generated texts among student essays. Computers and Education: Artificial Intelligence, 6, Article 100209. https://doi.org/10.1016/j.caeai.2024.100209

Mizumoto, A., Yasuda, S., & Tamura, Y. (2024). Identifying ChatGPT-generated texts in EFL students’ writing: Through comparative analysis of linguistic fingerprints. Applied Corpus Linguistics, 4(3), Article 100106. https://doi.org/10.1016/j.acorp.2024.100106

Waltzer, T., Pilegard, C., & Heyman, G. D. (2024). Can you spot the bot? Identifying AI-generated writing in college essays. International Journal for Educational Integrity, 20, Article 11. https://doi.org/10.1007/s40979-024-00158-3

Zhou, T., Cao, S., Zhou, S., Zhang, Y., & He, A. (2023). Chinese intermediate English learners outdid ChatGPT in deep cohesion: Evidence from English narrative writing. System, 118, Article 103141. https://doi.org/10.1016/j.system.2023.103141

Sebnem Kurt is a PhD candidate in the Applied Linguistics and Technology program at Iowa State University. She is a former Fulbright scholar and a TESOL certificate holder from Idaho State University. Her research interests include technology-assisted language learning and assessment, as well as online teacher training. Her most recent publication about the impact of contextual factors on international English teachers’ TPACK can be found in the Journal of Research on Technology in Education.

Shuhui Yin is currently a Ph.D. student in the Applied Linguistics and Technology program at Iowa State University. Her primary research interests are corpus-informed research in second language writing, language assessment and technology, and English for Academic Purposes.

Danilo Calle is currently a student in the TESL/Applied Linguistics Master’s program at Iowa State University. His research interests integrate his enthusiasm for artificial intelligence in education with the development of linguistic identity through language teaching. Although Danilo is just starting on his academic path, he has already developed a strong interest in researching the impact of artificial intelligence tools and digital spaces on the language development of ESL and EFL students.

Hwee Jean Lim is a Ph.D. student in Applied Linguistics and Technology, co-majoring in Human-Computer Interaction. She is currently an instructor for a Global Online Course, Teaching English Academic Writing to Speakers of Other Languages, and a member of the Online Learning Team (OLT). Her research focuses on the intersection of language learning and technology, specifically Computer-Assisted Language Learning (CALL) and the integration of digital tools in various aspects of language education, including instruction and evaluation practices.

Inyoung Na is a Ph.D. student in the Applied Linguistics and Technology program at Iowa State University. Her research interests include second language pronunciation, speech intelligibility, and the integration of artificial intelligence technologies in language testing and assessment. She is currently focused on investigating how learners interact with generative AI tools like ChatGPT for language learning and writing.

Nergis Danis is a PhD candidate in Applied Linguistics and Technology at Iowa State University, specializing in corpus linguistics and its application to language learning, particularly academic writing. Her research focuses on disciplinary variation and register analysis in academic writing, with publications in journals like English for Specific Purposes and the International Journal of Applied Linguistics.

Carol A. Chapelle is Distinguished Professor and LAS Dean’s Professor at Iowa State University in the Applied Linguistics and Technology program. Her research investigates second language teaching, learning, and assessment with an emphasis on evaluation methods and the use of technology. Her recent books include Exploring Artificial Intelligence in Applied Linguistics (Iowa State University Digital Press, 2024; with G. Beckett & J. Ranalli).
