Book Review of “Local Language Testing: Design, Implementation, and Development”

Newton Paulo Monteiro, Centro Universitário Alfredo Nasser, Brazil

Understanding Local Language Testing

Classroom-based assessments and large-scale tests are an essential part of the routine at schools, universities, and language centers. It is no wonder, therefore, that these are the first examples to come to mind whenever one thinks of assessment. Not only common stakeholders but also most language testers are likely to think of these examples first, as the focus of much of the academic and professional assessment literature suggests.

In a different vein, in 2020 Slobodanka Dimova, Xun Yan, and April Ginther gifted us with their book Local Language Testing: Design, Implementation, and Development, an invaluable source of guidance that fills the gap between classroom and large-scale assessment. This nine-chapter book outlines the steps to develop local tests, from planning to the use of test scores. The first two chapters provide definitions and a rationale for local test development. In Chapter 1, the authors state that a local test is “one whose development is designed to represent the values and priorities within a local instructional program and designed to address problems that emerge out of a need within the local context in which the test will be used” (p. 1). They also highlight the potential of local tests for problem-solving, since different contexts face specific teaching, administrative, and assessment problems that can only be addressed by local tests. Chapter 2 discusses the role of local tests for diagnostic purposes, linking test purposes to instructional outcomes and language assessment literacy. Right from the introduction, Dimova and colleagues clarify important yet hard-to-understand terminology, with several references to their local projects in the U.S. and Denmark.

From Chapter 3 to Chapter 7, the authors discuss the process of test development, from conception to delivery. This section is the core of the book for anyone searching for guidance on test development. Chapter 3 raises practicality issues related to cost, material resources, human resources, and testing roles, as well as the important issue of test and task design through specifications. It highlights the need to define the test purpose and underscores the role of manuals for different stakeholders. While Chapter 4 describes the types of tasks used to assess different skills, Chapter 5 presents the options for test delivery (paper, digital, and online) and points out potential communication problems with IT professionals during the development of the delivery system.

Chapters 6 and 7 address the assessment of productive skills (speaking and writing) through rating scales and rater training. Specifically, Chapter 6 discusses different types of scales (analytic, holistic, and primary trait) and scale design methods (data-driven and theory-driven). It also presents benchmarking (the identification of representative performances) as an approach to performance assessment. Chapter 7 explores methods for rater selection, training, and norming, and supplements this discussion with some recommendations on statistical analyses of test reliability.

Chapter 8 describes the treatment of test data. It urges readers to have a clear view of the kind of data they will need in their assessment projects. For example, should testers collect only test scores, or should information such as students’ age, sex, ethnicity, or language background also be gathered for richer data analysis? Such decisions should be made early in the planning phase of assessment projects.

Chapter 9 is dedicated to the authors’ reflections on their experiences in developing and managing local tests. They make it clear that assessment projects are collaborative efforts rather than individual achievements. They also highlight that conducting assessment projects may be challenging, since local test developers need to juggle many professional activities at the same time, but that such projects are a worthwhile source of learning and information for language programs. The final pages provide an appendix with model documents for student assessment, feedback, and self-assessment.

Contextualizing Local Language Testing

In my view, there are three important lessons in the book: the role of communities of practice, the role of local expertise, and the view of validation. They resonate with me particularly because of the experiences and challenges I have had in my work context in higher education. My professional duties currently comprise administrative activities in the evaluation of higher education programs, policies, and facilities. I am also in charge of an online exam of general education and Portuguese and of an EAP/ESP reading exam for medical students. In addition, I am conducting a research project on assessing the reading of primary school students in the neighboring community.

Throughout the book’s 211 pages, Dimova and colleagues emphasize that local tests provide learning opportunities for all stakeholders. They argue that a community of practice can result from continuous collaborative work. This is especially clear in Chapter 7, where the authors discuss rater training and even present an example of an emerging community of practice in the context of one of their exams. This is a remarkably interesting insight. In my context, I have realized that assessment knowledge is distributed across many stakeholders. Making the most of it is a matter of working collaboratively. Even if there are few or no specialists, a lot can be done by considering everyone’s contribution. Unfortunately, apart from some mentions and examples, the authors neither demonstrate how to foster communities of practice nor discuss how individuals differ in their engagement with the community’s activities.

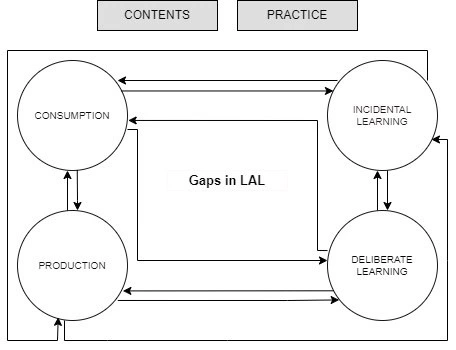

Such collaborative work, in turn, is possible because of the availability of local expertise. Many skills are necessary to develop an assessment program, and only some of them are specific to assessment. According to Dimova et al. (2022), it is possible to “draw on local expertise at each stage of test development, implementation, administration, and maintenance” (p. 345). Certainly, assessment staff can benefit from this knowledge-sharing environment. In Monteiro (2023), I reflected on the sources of language assessment literacy (see Figure 1). Language assessment literacy emerges not only from consuming content (courses, books, articles, and test reports) but also from knowledge production, practice, and incidental learning. Deliberate learning through assessment projects fosters rich environments and fills gaps in language assessment literacy, nurturing the acquisition of expertise by individuals and groups.

Figure 1. Sources of Language Assessment Literacy

The third lesson is the authors’ view of validation as an ongoing process. In contrast to large-scale exams, where pilot and field tests are rigorously carried out with hundreds or even thousands of participants, “local tests seldom have the opportunity to conduct extensive embedded pre-testing” (Dimova et al., 2020, p. 60). In such cases, exploratory and small-scale piloting are the only options left for local tests. As a result, local language testers practice “an extended approach” (Dimova et al., 2022, p. 347), which means that data are collected after each test administration. Analyzing these data can contribute to a better understanding of how the test performs.

Despite such recommendations, other critical issues might affect the validation of local tests. As I have discussed elsewhere, assessment practices are shaped by drivers and constraints (Monteiro, forthcoming). Drivers are the motivating factors that foster a practice, while constraints are the limiting factors that may prevent the full attainment of intended results. An institutional policy requiring student assessment or a budget allocated to an assessment project are examples of drivers, whereas stakeholders’ misunderstanding of assessment procedures is an example of a constraint. Paradoxically, the same people can be sources of both drivers and constraints: they may support assessment projects while also placing demands on them that make them unfeasible. Therefore, despite the authors’ sound advice, contextual values can affect how far local testers can go in following these validation and development recommendations. This calls for more language assessment literacy initiatives that address educators’ needs (Gan & Lam, 2022). The challenge lies in overcoming the barriers within each department and at each phase of test development in order to facilitate the workflow of test developers.

Even so, teachers can benefit greatly from the book. They can consider its recommendations for the planning, delivery, and analysis of tests. They can explore insights from test data to improve their teaching. They can even set aside time to discuss the book’s chapters and monitor their progress in improving assessment practices. Local Language Testing will certainly be a source of inspiration and guidance for teachers.

References

Dimova, S., Yan, X., & Ginther, A. (2020). Local language testing: Design, implementation, and development. Routledge.

Dimova, S., Yan, X., & Ginther, A. (2022). Local tests, local contexts. Language Testing, 39(3), 341–354. https://doi.org/10.1177/02655322221092392

Gan, L., & Lam, R. (2022). A review on language assessment literacy: Trends, foci and contributions. Language Assessment Quarterly, 19(5), 503–525. https://doi.org/10.1080/15434303.2022.2128802

Monteiro, N. P. (2023, May 27). Conceptualising the sources of language assessment literacy: A context-driven approach [Paper presentation]. New Directions LATAM 2023, São Paulo, Brazil.

Monteiro, N. P. (forthcoming). A conceptual framework to contextualise local language assessment literacy. In B. Baker & L. Taylor (Eds.), Language assessment literacy and competence: Research and reflections from the field. Cambridge University Press.

Newton Paulo Monteiro is an evaluation coordinator and lecturer of languages at Centro Universitário Alfredo Nasser, Brazil. His research interests include local assessment development and language assessment literacy.